DeepSeek R1 and the Open-Source AI Revolution: What It Means for Enterprise

On January 20, 2025, a relatively unknown Chinese AI lab called DeepSeek released R1, a 671-billion-parameter reasoning model, under the MIT License. Within days, the DeepSeek app overtook ChatGPT as the #1 free app on the iOS App Store, the resulting sell-off erased close to $600 billion from Nvidia's market cap in a single trading session, and enterprise AI teams were forced to rethink their strategies.

This wasn't just another model launch. It was a turning point.

The AI cost curve just broke. Open-source models now match proprietary performance at 5–10% of the price — and enterprises are paying attention.

Why DeepSeek R1 Matters

DeepSeek R1 isn't noteworthy because it's "another open-source model." It matters because it proved three things simultaneously:

- Performance parity is real. R1 matches or exceeds OpenAI's o1 on math, coding, and general reasoning benchmarks, making it the first open-source model to do so convincingly.

- Cost efficiency is transformative. Training and inference costs are a fraction of those of comparable proprietary models, with API pricing roughly 90% cheaper than OpenAI equivalents.

- Open-source is enterprise-ready. The MIT license means full commercial use, modification, on-premises deployment, and zero vendor lock-in.

The Model Family: Right-Sizing AI for Your Workload

One of R1's most practical innovations is its model family: six distilled models ranging from 1.5B to 70B parameters, alongside the full 671B model. This means enterprises can match model size to workload:

| Model | Parameters | Best For |
|---|---|---|
| R1-Distill-Qwen-1.5B | 1.5B | Edge devices, simple classification, lightweight chat |
| R1-Distill-Qwen-7B | 7B | Internal tools, document summarization, FAQ bots |
| R1-Distill-Qwen-32B | 32B | RAG pipelines, code generation, complex analysis |
| R1 (Full) | 671B (MoE) | Enterprise reasoning, multi-step planning, research |

This "right-sizing" approach is a game-changer for enterprises managing compute budgets. Instead of paying for a 175B+ parameter model to answer simple questions, you deploy a 7B model locally, where each additional query costs close to nothing once the hardware is in place.
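To make right-sizing concrete, here is a minimal Python sketch of a lookup that picks the smallest model tier for a given workload. The tier names and sizes come from the table above; the task labels, the `TASK_MIN_TIER` mapping, and the `pick_model` helper are hypothetical and exist only for illustration. A real deployment would derive the mapping from its own benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    params_b: float  # parameter count in billions


# Tiers taken from the table above, ordered smallest to largest.
TIERS = [
    ModelTier("R1-Distill-Qwen-1.5B", 1.5),
    ModelTier("R1-Distill-Qwen-7B", 7),
    ModelTier("R1-Distill-Qwen-32B", 32),
    ModelTier("R1 (Full)", 671),
]

# Hypothetical mapping from a workload label to the index of the
# smallest tier the table suggests for it. Adjust after benchmarking.
TASK_MIN_TIER = {
    "classification": 0,
    "faq": 1,
    "summarization": 1,
    "rag": 2,
    "code_generation": 2,
    "multi_step_planning": 3,
}


def pick_model(task: str) -> ModelTier:
    """Return the smallest tier mapped to `task`.

    Unknown tasks fall back to the largest model, trading cost for safety.
    """
    index = TASK_MIN_TIER.get(task, len(TIERS) - 1)
    return TIERS[index]


if __name__ == "__main__":
    for task in ("faq", "rag", "something_new"):
        print(task, "->", pick_model(task).name)
```

Falling back to the largest model for unknown tasks is a deliberately conservative choice. Teams that care more about cost than accuracy could default to a mid-sized tier instead.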

What This Means for Enterprise AI Strategy

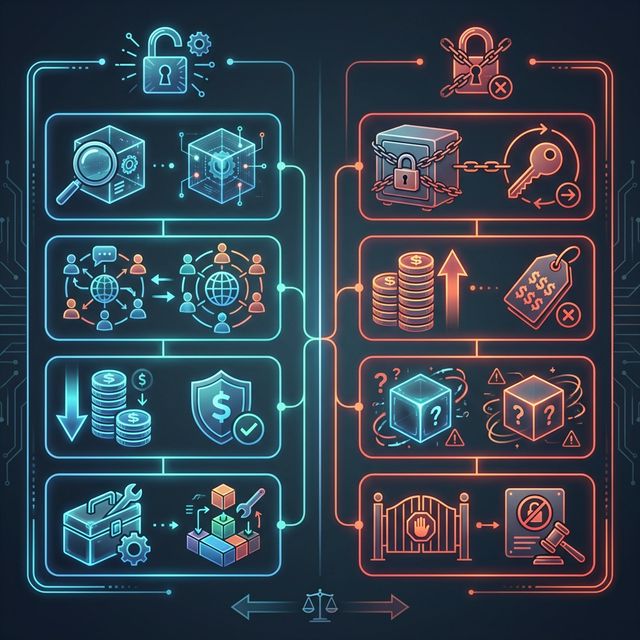

1. The End of Vendor Lock-In

With R1's MIT license, enterprises can:

- Run models on-premises — critical for regulated industries like healthcare and finance

- Fine-tune on proprietary data — without sharing sensitive information with third-party APIs

- Audit the model — inspect the published weights and architecture directly, and test behavior in-house

- Eliminate recurring API costs — pay for compute, not per-token pricing
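Whether paying for compute beats per-token pricing depends on volume. The sketch below, written in Python, estimates the monthly token volume at which a fixed self-hosting bill matches an API bill. Every number in it is a hypothetical placeholder, not a quoted price, and the model ignores staffing, maintenance, and utilization, so treat it as a first-pass estimate only.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million: float) -> float:
    """API spend for a month, given a flat price per million tokens."""
    return tokens_per_month / 1_000_000 * price_per_million


def break_even_tokens(fixed_monthly_compute: float, price_per_million: float) -> float:
    """Monthly token volume above which self-hosting costs less than the API.

    Assumes self-hosting is a flat monthly cost and the API is purely
    per-token. Both are simplifications.
    """
    if price_per_million <= 0:
        raise ValueError("price_per_million must be positive")
    return fixed_monthly_compute / price_per_million * 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs: $3,000/month for hardware, $10 per million API tokens.
    threshold = break_even_tokens(3_000, 10.0)
    print(f"Break-even volume: {threshold:,.0f} tokens/month")
    print(f"API bill at 500M tokens: ${monthly_api_cost(500_000_000, 10.0):,.2f}")
```

Below the break-even volume, a hosted API is likely the cheaper option, which is one more reason to keep the architecture flexible.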

2. The Multi-Model Future

The smartest enterprise AI strategies in 2025 aren't betting on a single provider. They're building model-agnostic architectures that can swap between DeepSeek, Claude, GPT, Gemini, and Llama based on task, cost, and performance requirements.
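One way to picture a model-agnostic architecture is a thin routing layer that treats every provider as an interchangeable function. The Python sketch below is an illustration under stated assumptions, not a reference design: `ModelRouter`, `fake_deepseek`, and `fake_llama` are hypothetical names, and the two providers are local stubs rather than real API clients.

```python
from typing import Callable, Dict, List

# A provider is any callable that takes a prompt and returns text.
# Real clients (hosted APIs or local models) would be wrapped to fit this shape.
Provider = Callable[[str], str]


class ModelRouter:
    """Registry of providers with ordered fallback."""

    def __init__(self) -> None:
        self._providers: Dict[str, Provider] = {}

    def register(self, name: str, provider: Provider) -> None:
        self._providers[name] = provider

    def complete(self, prompt: str, preference: List[str]) -> str:
        """Try providers in preference order and return the first success."""
        errors = []
        for name in preference:
            provider = self._providers.get(name)
            if provider is None:
                errors.append(f"{name}: not registered")
                continue
            try:
                return provider(prompt)
            except Exception as exc:  # any provider failure triggers fallback
                errors.append(f"{name}: {exc}")
        raise RuntimeError("no provider succeeded: " + "; ".join(errors))


# Stub providers standing in for real integrations (hypothetical).
def fake_deepseek(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def fake_llama(prompt: str) -> str:
    return f"[llama] {prompt}"


router = ModelRouter()
router.register("deepseek", fake_deepseek)
router.register("llama", fake_llama)

# The first choice fails, so the router falls back to the second.
print(router.complete("hello", ["deepseek", "llama"]))  # prints "[llama] hello"
```

Because callers only pass a preference list, swapping or reordering providers by task, cost, or performance becomes a configuration change rather than a rewrite.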

3. The Geopolitical Dimension

DeepSeek's Chinese origin raises legitimate questions about data sovereignty and supply chain risk. Enterprises should:

- Deploy open-source models on their own infrastructure

- Conduct independent security audits

- Maintain multi-provider flexibility as a hedge

The Bigger Picture: Open-Source Is Winning

DeepSeek R1 is the most dramatic example, but it's part of a larger wave:

- Meta's Llama 3 continues to push open-source boundaries

- Mistral's Mixtral models offer strong European alternatives

- Google's Gemma provides compact, high-quality open models

- Microsoft now hosts DeepSeek R1 on Azure AI Foundry

The message is clear: the open-source AI ecosystem has reached critical mass. Enterprises that build on proprietary-only stacks risk being locked into yesterday's pricing and yesterday's capabilities.

How Spring Software Can Help

Navigating the open-source AI landscape requires more than just downloading a model. At Spring Software, we help enterprises:

- Evaluate and benchmark open-source models against their specific use cases

- Build model-agnostic architectures that prevent vendor lock-in

- Deploy and fine-tune models on private infrastructure

- Implement governance frameworks for responsible AI adoption

The open-source AI revolution isn't coming — it's here. The question is whether your enterprise is positioned to benefit from it.

Talk to our team about building your open-source AI strategy.
